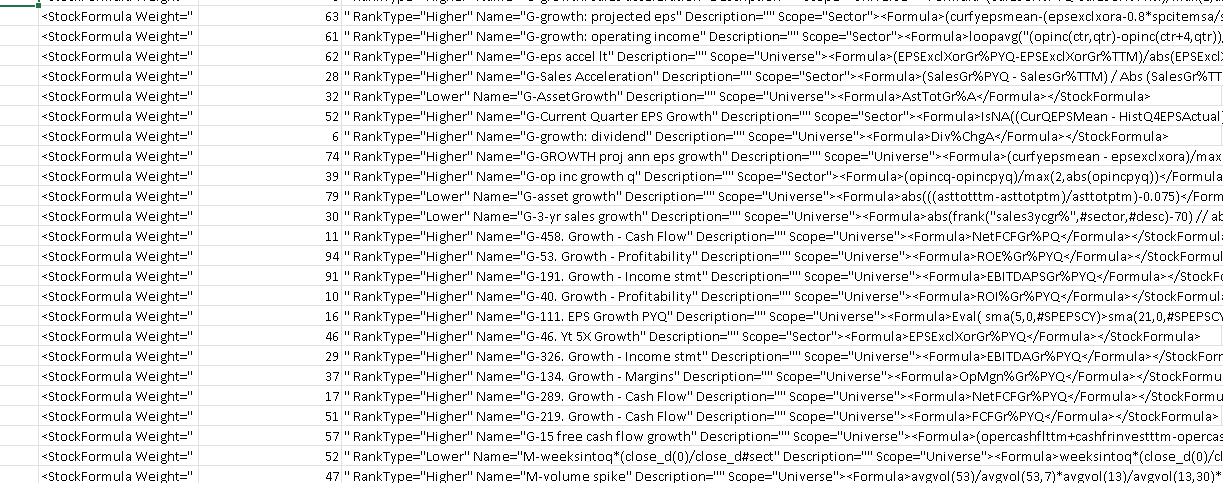

I have 90 nodes that I believe in, and I want to (over)optimize their weights in a ranking system. I want to test this in 4 sub-universes.

I can then move node weights up or down by ±2-12% by creating thousands of ranking systems. (See the description from Yuval below.) Doing this manually will probably take months.

(I can use Excel, a text editor, and "=RANDBETWEEN([LowerLimit],[UpperLimit])" to speed this up a bit.)
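For what it's worth, the RANDBETWEEN idea can be sketched in a few lines of Python instead of Excel. This is only a rough sketch under my own assumptions: the node names, the equal starting weights, and the function names `perturb_weights` and `generate_variants` are all made up for illustration, and it only generates the weight sets. It does not create ranking systems or call any API.

```python
import random

def perturb_weights(base_weights, low_pct=2.0, high_pct=12.0, rng=None):
    """Move each weight up or down by a random percentage between
    low_pct and high_pct, then rescale so the weights sum to 100."""
    rng = rng or random.Random()
    perturbed = {}
    for node, weight in base_weights.items():
        pct = rng.uniform(low_pct, high_pct) / 100.0  # like RANDBETWEEN, but continuous
        sign = rng.choice((-1, 1))                    # weight up or weight down
        perturbed[node] = weight * (1 + sign * pct)
    total = sum(perturbed.values())
    # Rescaling can push the final change slightly outside the 2-12% band.
    return {node: 100.0 * w / total for node, w in perturbed.items()}

def generate_variants(base_weights, n_variants, seed=0):
    """Build n_variants perturbed weight sets from one base set (seeded for repeatability)."""
    rng = random.Random(seed)
    return [perturb_weights(base_weights, rng=rng) for _ in range(n_variants)]

# Hypothetical example: 90 equally weighted nodes with invented names.
base = {f"node_{i:02d}": 100.0 / 90 for i in range(1, 91)}
variants = generate_variants(base, n_variants=1000)
```

Each entry in `variants` is one candidate weight set that would still have to be turned into a ranking system and tested by some other means.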

Is it possible to do this automatically? Has anyone created Python code using the API that can do this automatically? Will the OPTIMIZER work for this?

I don’t need to automate the process for the screener or the simulator. A performance test for the ranking system will suffice. Something much like the Danp spreadsheet, which applies to one node at a time, but for an entire ranking system with multiple nodes: Single factor testing tool developed using the API - #26 by Whycliffes

It doesn’t look like I can test multiple ranking systems with DataMiner: DataMiner Operations - Help Center

Otherwise, there are several good threads below that deal with the questions of automation, weighting, and optimisation:

“Here’s what I’d recommend. Assign every node a weight divisible by 4. Make changes in the weights to see if returns improve, but always keep every node’s weight divisible by 4. Once you’ve optimized the weights through slow trial-and-error, do it again but make each node’s weight divisible by 2. Stop there.”
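As I read it, the procedure in that quote is a coarse-to-fine trial-and-error search, and it could be sketched roughly as below. This is my own interpretation, not Yuval's code: the `refine` function, the three invented nodes, and especially `toy_score` are assumptions. In practice the score would have to come from a ranking system performance test, which this sketch does not run.

```python
from itertools import permutations

def refine(weights, score, step):
    """Greedy trial-and-error: move `step` points of weight from one node
    to another and keep the move only if the score improves.
    Repeats until no single move helps."""
    weights = dict(weights)
    best = score(weights)
    improved = True
    while improved:
        improved = False
        for src, dst in permutations(list(weights), 2):
            if weights[src] < step:
                continue  # never push a weight below zero
            trial = dict(weights)
            trial[src] -= step
            trial[dst] += step
            trial_score = score(trial)
            if trial_score > best:
                weights, best = trial, trial_score
                improved = True
    return weights, best

# Stand-in score (an assumption): closeness to an arbitrary hidden target.
# A real score would be the result of a ranking system performance test.
TARGET = {"value": 50, "momentum": 30, "quality": 20}

def toy_score(weights):
    return -sum((weights[n] - TARGET[n]) ** 2 for n in weights)

start = {"value": 32, "momentum": 32, "quality": 36}     # all divisible by 4, sum 100
coarse, coarse_score = refine(start, toy_score, step=4)  # weights stay divisible by 4
fine, fine_score = refine(coarse, toy_score, step=2)     # then divisible by 2; stop there
```

With 90 nodes, one sweep of this loop tries 90 × 89 moves, so every sweep would need thousands of performance tests. That is the part that would have to be automated.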