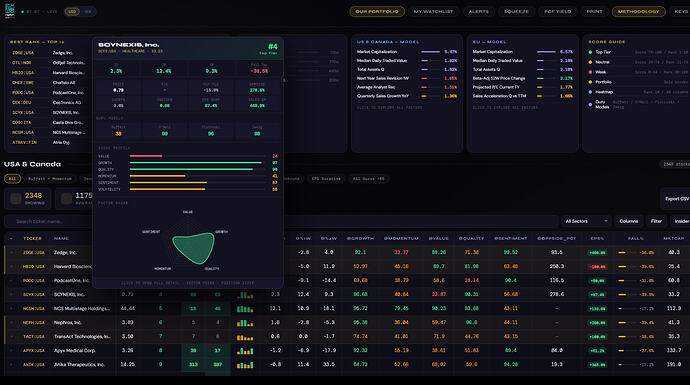

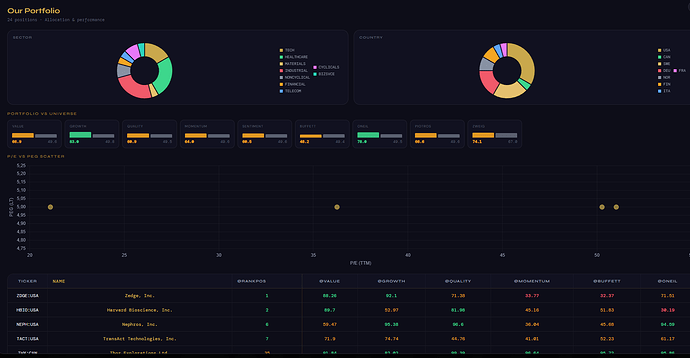

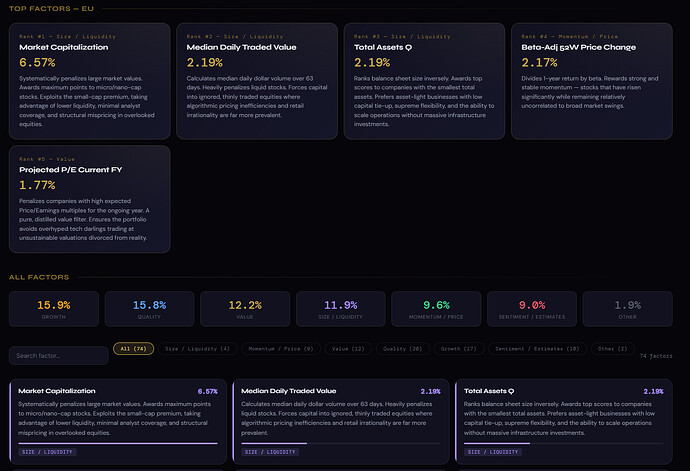

I cannot code, but it's fascinating how easy it has become with AI; here, just for testing, I created a dashboard:

I agree. These AI tools have come a long way, especially if you combine Opus 4.6 and Codex.

Professionally, I work at an investment firm. One of our portfolio companies is one of the largest bus companies in northwestern Europe. Rides were still being combined and planned by hand, which took 5-10 people solving a logistical problem.

But now, within a few days, a whole planning tool has been created that automatically converts individual rides into a complete schedule. It determines the number of buses to be used, the number of drivers, when they have breaks, and where each bus will park. And it is all visualised in a dashboard much like the one you show here. All with the latest AI tools.
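To give a feel for the "number of buses" part of that problem only, here is a minimal sketch, not the tool described above. It assumes each ride is just a start and end time and that a bus can begin a new ride the moment its previous one ends; the `min_buses` helper and the ride times are hypothetical, and driver breaks, parking, and empty driving between rides are ignored.

```python
import heapq

def min_buses(rides):
    """Return the minimum number of buses needed to cover all rides.

    rides: list of (start, end) times in minutes since midnight.
    Assumes a bus can start a new ride the moment its previous one ends.
    """
    busy_until = []  # min-heap of end times, one entry per bus in use
    for start, end in sorted(rides):
        # Reuse the bus that frees up earliest if it is free by `start`.
        if busy_until and busy_until[0] <= start:
            heapq.heapreplace(busy_until, end)
        else:
            heapq.heappush(busy_until, end)  # otherwise add another bus
    return len(busy_until)

# Hypothetical rides: 08:00-09:00, 08:30-09:30, 09:00-10:00, 09:45-10:30
rides = [(480, 540), (510, 570), (540, 600), (585, 630)]
print(min_buses(rides))
```

The answer equals the largest number of rides running at the same moment, which is why a single pass over the rides sorted by start time is enough for this simplified version.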

These things are becoming practical now.

Also, based on screenshot 1, we all hold similar positions and probably trade at similar times.

I also agree. I built a tool that helps immensely with my process for trading models, handling currency conversions, applying hedges, etc. What was once a fairly fraught process begging for a disastrous fat-finger or similar mistake has become almost self-running.

Also interesting is Victor's bus company anecdote. My son's school bus company doesn't allow us to indicate when he doesn't need a ride, so the bus will come by whether he's getting on or not. Fair enough, I suppose. However, it also comes to drop him off after school, even if he isn't on it! Every time I see that, it drives me mad. I wish they’d apply some AI or even a touch of logic to their process.

Crazy how we are all using this at once. I wonder how it will affect P123 AI 3.0 if not P123 AI 2.0 and also P123 AI apps if that is a separate thing.

Seems like more people will be able to create useful apps. But I think more important than that is the ability to chain apps together, either at home with Claude Code, OpenAI Codex (or something else), or on P123's side with, say, P123 AI 3.0.

Super nice. I made one this week too. I’m out and about, will share it with the community when I’m back by my computer.

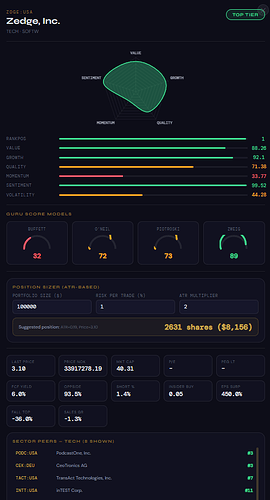

What I don’t get is how we’re all pulling Zedge. Do they really make money in 2026 off ringtones and AI generated wallpapers?

The stock ranked highly due to strong EPS and sales growth in the prior-year quarter, a low P/S ratio, and a gross margin of 93.51% — all factors commonly rewarded by P123 ranking systems.
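As a rough illustration of how several factors can push one stock to the top, the sketch below averages per-factor percentile ranks. It is an assumption-laden toy, not P123's actual ranking algorithm: the tickers, factor names, values, and equal weights are all hypothetical, ties are not handled specially, and at least two stocks are required.

```python
def percentile_ranks(values, higher_is_better=True):
    """Map each value to a 0-100 rank; the best value gets 100.

    Ties are broken by input order (a simplification).
    """
    order = sorted(range(len(values)), key=lambda i: values[i],
                   reverse=not higher_is_better)
    ranks = [0.0] * len(values)
    for position, i in enumerate(order):
        ranks[i] = 100.0 * position / (len(values) - 1)
    return ranks

# Hypothetical stocks and factor values, for illustration only.
stocks = {
    "AAA": {"eps_growth": 40.0, "sales_growth": 25.0,
            "price_to_sales": 0.8, "gross_margin": 90.0},
    "BBB": {"eps_growth": 10.0, "sales_growth": 5.0,
            "price_to_sales": 3.0, "gross_margin": 40.0},
    "CCC": {"eps_growth": -5.0, "sales_growth": 12.0,
            "price_to_sales": 6.0, "gross_margin": 30.0},
}
# True means higher is better; a low P/S ratio is rewarded, so it is False.
factors = {"eps_growth": True, "sales_growth": True,
           "price_to_sales": False, "gross_margin": True}

tickers = list(stocks)
composite = {t: 0.0 for t in tickers}
for name, higher_is_better in factors.items():
    ranks = percentile_ranks([stocks[t][name] for t in tickers], higher_is_better)
    for t, r in zip(tickers, ranks):
        composite[t] += r / len(factors)  # equal weight per factor

for t in sorted(composite, key=composite.get, reverse=True):
    print(t, round(composite[t], 1))
```

A stock that is strong on growth, cheap on P/S, and high on gross margin scores near the top on every factor at once, so many differently weighted systems would plausibly surface the same name.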

That said, Zedge's earnings today were disappointing, which may change the picture going forward.

More broadly, it raises an interesting question: what happens to multi-factor models in a world where any investor can simply ask an AI agent to pick their top 20 stocks?

I remember when Yuval would share magnificent formulas built on rigorous accounting principles. Now AI agents seem poised to take over that task entirely...

If LLMs ever realize that factor investing is king for retail investors, things are going to get interesting. Imagine Claude realizing that Portfolio123 is the ultimate source of data for this, and pushing everyone here.

Not at all. LLMs are absolutely terrible at accounting. See "Don't use LLMs for accounting measures."

Also see the updated test here: Finance Agent v1.1. This is primarily for simple retrieval and calculation tasks that have little to do with accounting. Note: "We have created a benchmark that tests the ability of agents to perform tasks expected of an entry-level financial analyst."

I simply cannot imagine a world where an LLM can come up with the kinds of factors I use, some of which require over 600 characters. In fact, the FactSet-based ranking system I use, with 340 factors, probably has only a dozen or two simple P123 factors like SI%ShsOut or CostGGr%TTM. The rest are all very complicated. It looks something like this:

And there are a whole bunch of custom formulas in there too.

I may be wrong, but I believe that that's not how LLMs work. They are not programmed for those kinds of assertions. It's a nice thought, but rest assured that we hardworking users are not about to get crowded out because of Claude. (And don't get me wrong: I use Claude practically every day.)

Yeah, it was a bit of a wild Friday-night speculation.

There’s also a little bit of extra hope in that the vast majority of investing-related training data for LLMs is horrible IMO. (As in: most online text with investment advice is useless). We should be safe for a while.

Edit: (Though we should expect agents to start making accounts here at some point)

LLMs may be weak at accounting reasoning, but they are good at learning established formulas and modifying them.

Gemini 3.1 is currently the best at generating correct syntax and almost never makes mistakes with my prompts. Sonnet 4.6 sometimes introduces small errors, and I usually need to re-prompt 2–3 times.

Below is an example of a 10-factor ranking model generated with Gemini. The prompt still needs improvement (e.g., inferring the proportion of N/A values for each factor), but most of the factors themselves are reasonable. With prompt modification, the model could generate more sophisticated formulas.

I also asked Gemini to generate 50 formulas and it made no mistakes. This tool may therefore be very useful for factor generation, with the final selection done through judgmental analysis and historical backtesting.

Claude helped me produce a Hidden Markov Model for market timing using hmmlearn.

Retail investors who do not code all that well can now use Hidden Markov Models. It is “impressive how straightforward it has become with AI”. No doubt about it.
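For readers wondering what such a model does with the data, here is a minimal sketch of the inference step of a two-regime Gaussian HMM in plain Python. It is not the poster's code and does not use hmmlearn: a library like hmmlearn would estimate the means, volatilities, and transition probabilities from data, whereas here every parameter and every return is a hypothetical value hard-coded for illustration.

```python
import math

def gaussian_pdf(x, mean, std):
    """Density of a normal distribution at x."""
    z = (x - mean) / std
    return math.exp(-0.5 * z * z) / (std * math.sqrt(2.0 * math.pi))

def filter_regimes(returns, means, stds, transition, initial):
    """Forward algorithm: P(state_t | returns up to t) for each day t."""
    n_states = len(means)
    belief = list(initial)
    history = []
    for t, r in enumerate(returns):
        if t > 0:
            # Predict: push yesterday's belief through the transition matrix.
            belief = [sum(belief[i] * transition[i][j] for i in range(n_states))
                      for j in range(n_states)]
        # Update: weight each state by how well it explains today's return.
        weighted = [belief[j] * gaussian_pdf(r, means[j], stds[j])
                    for j in range(n_states)]
        total = sum(weighted)
        belief = [w / total for w in weighted]
        history.append(belief)
    return history

# Hypothetical parameters: state 0 = calm market, state 1 = turbulent market.
means = [0.0005, -0.001]
stds = [0.007, 0.025]
transition = [[0.97, 0.03],   # calm -> calm, calm -> turbulent
              [0.05, 0.95]]   # turbulent -> calm, turbulent -> turbulent
initial = [0.5, 0.5]

# Hypothetical daily returns: three quiet days, then three large moves.
returns = [0.004, -0.002, 0.006, -0.035, 0.041, -0.028]

history = filter_regimes(returns, means, stds, transition, initial)
for day, belief in enumerate(history):
    print(day, f"P(turbulent) = {belief[1]:.3f}")
```

A timing rule would then act on the turbulent-regime probability, for example by reducing exposure once it crosses a chosen threshold; the threshold itself is a modelling choice that needs backtesting.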

Imagine the number of iterations needed for an AI to come up with a sophisticated ranking system of 100 formulas with 3-4 variables per formula. Assuming 1000 variables to choose from and 10 weights to choose from:

(1000 variables^4 x 10 weights)^100 formulas = 10^1300 tests.

This is assuming, of course, that every combination is tested through brute force. Anyway, it would take every computer on a septillion earths working together for more than a googol years.
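The arithmetic above can be checked in a few lines. The sketch uses only the numbers from the post and takes the upper end of "3-4 variables" (4 per formula); the variable names are just labels for this example.

```python
import math

variables = 1000        # candidate variables to choose from
vars_per_formula = 4    # upper end of "3-4 variables per formula"
weights = 10            # possible weights per formula
formulas = 100          # formulas in the ranking system

per_formula = variables ** vars_per_formula * weights  # choices for one formula
total = per_formula ** formulas                         # exact big integer

# Work with the base-10 exponent instead of printing a 1301-digit number.
exponent = formulas * math.log10(per_formula)
print(f"choices per formula: 10^{math.log10(per_formula):.0f}")
print(f"total combinations:  10^{exponent:.0f}")
```

Each formula alone has 10^13 possibilities, and raising that to the 100th power is what produces the 10^1300 figure.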

Good point and absolutely true!! But humans can be even slower by themselves. LLMs can help speed things up when partnered with a human.

You have to tell the LLM what to do and make it use the available computer resources efficiently. But if the human is smart, a lot can get done.

As an example outside of investing, the matrix multiplication requiring all those GPUs (or in Google’s case, TPUs) used in the Markov chain that is behind present-day Google web searches simply could not be done by a human alone.

Just ask Yahoo how keeping humans in the loop for web searches worked out for them.

It would literally take me until the end of the universe to calculate the eigenvalues and eigenvectors for one search on Google without modern tools. Hmmm, and then there would be the sort.
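To make the Markov chain and eigenvector remark concrete, here is a minimal sketch of the textbook PageRank computation by power iteration. Google's production ranking is not public, so this only illustrates the published idea; the four-page `web` graph, the `pagerank` helper, and the 0.85 damping value are assumptions for the example. Repeatedly applying the transition step converges to the chain's stationary distribution, which is the dominant eigenvector of its transition matrix.

```python
def pagerank(links, damping=0.85, tol=1e-10, max_iter=1000):
    """Textbook PageRank by power iteration.

    links: dict mapping each page to the list of pages it links to.
    Every linked-to page must also appear as a key.
    Returns a dict of page -> score; scores sum to 1.
    """
    pages = list(links)
    n = len(pages)
    rank = {p: 1.0 / n for p in pages}
    for _ in range(max_iter):
        # Every page gets the "random jump" share first.
        new_rank = {p: (1.0 - damping) / n for p in pages}
        for page, outgoing in links.items():
            if outgoing:
                share = damping * rank[page] / len(outgoing)
                for target in outgoing:
                    new_rank[target] += share
            else:
                # A page with no links spreads its rank over all pages.
                for target in pages:
                    new_rank[target] += damping * rank[page] / n
        change = sum(abs(new_rank[p] - rank[p]) for p in pages)
        rank = new_rank
        if change < tol:
            break
    return rank

# Hypothetical four-page web: C is linked to by A, B, and D.
web = {"A": ["B", "C"], "B": ["C"], "C": ["A"], "D": ["C"]}
scores = pagerank(web)
for page in sorted(scores, key=scores.get, reverse=True):
    print(page, round(scores[page], 3))
```

The final loop is "the sort" mentioned above: once the scores exist, ranking pages is a simple ordering by score. On four pages this runs instantly; the same iteration over billions of pages is where the hardware comes in.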

How are you all using Claude to write P123 formulas? Are you linking it directly to P123 somehow? Are you feeding it backtests, factors, etc. through Excel files? Probably dumb questions, but I am trying to get up to speed here.

I provide this content from P123 syntax: Entire Portfolio123 Syntax in ONE FILE + more examples + my prompt.

This would be an unsupervised way of writing formulas.

You can also provide historical data so Claude can perform backtests internally, and then decide which formulas are the best, provide weights, etc.

Charles, if you are not hooked into the API yet and want to use it, Claude can easily do that for you.

Do you need a new API key every time you use Claude? Pretty new to the whole API thing too. Lol