All,

The free JASP download keeps updating with newer versions. Download here: JASP

With the most recent version you can now easily save a trained machine learning model for predictions. You can train a Boosting, Decision Tree, K-Nearest Neighbors, Neural Network, Random Forest, Regularized Linear, or Support Vector Machine model. And you can do it with regression or classification.

In addition, you can now do clustering with machine learning. And, you are able do classic factor analysis (as well as PCA). I have developed a P123 model with factor analysis that is doing well out-of-sample. My out-of-sample test results are a P123 port on auto rebalance so I have not messed this up. I will probably fund this at some time (probably in April) if it continues to do well.

I am not sure that this will work with the huge data sets using P123’s API. And of course, I look forward to seeing what P123 does with AI.

But I ran some classification models on ETF excess returns (pricing data actually from YAHOO!). Specifically, I used the 3 month excess returns for several ETFs as predictors. Here excess returns are defined as the excess returns compared the the median return of all of the ETFs in the study. This is not a huge file.

I ran this as a classification model with the target being whether the ETF performed better or worse than the median ETF in the study over the next month.

Using Boosting in JASP the test sample gave a balanced accuracy of 0.617 for TLT.

[color=firebrick]CAUTION: there is a huge multiple comparison problem with what I have done with TLT here. Or just call it cherry-picking. I have heeded this caution myself and I am not using this model yet!!![/color]

[color=firebrick]So this is a proof-of-concept AT BEST.[/color] With careful use of test samples and consideration of the multiple comparison problem this might be useful in the future. And if not for me, maybe someone reading this may find it useful.

Future studies: Better statistics keeping the multiple comparison problem in mind, for example, and [color=firebrick]adding FED data (e.g., 10-year minus 2-year bond yields and/or CPI as a predictors).[/color]

BTW, always use balanced accuracy as your metric for classification models. And Brier scores if you get a probability output for a classification problem in Python.

Most people trained in Python will want to confirm the results in Python at a minimum. And a model can probably be improved upon in Python. But the ease of saving a model (without importing Pickle), the menu driven nature of JASP as well as uploads of CSV files (which is also easy in Python) makes this attractive. One might even come back to JASP after running it in Python.

Also I have just started doing this and I expect to find some things I do not like about JASP. I may have to wait for the next version or the one after before I actually use it (although I already have used factor analysis as I said above) and I do not plan to stop using Python, ever. So if you say it is not useful after looking at it closely, I may end up agreeing.

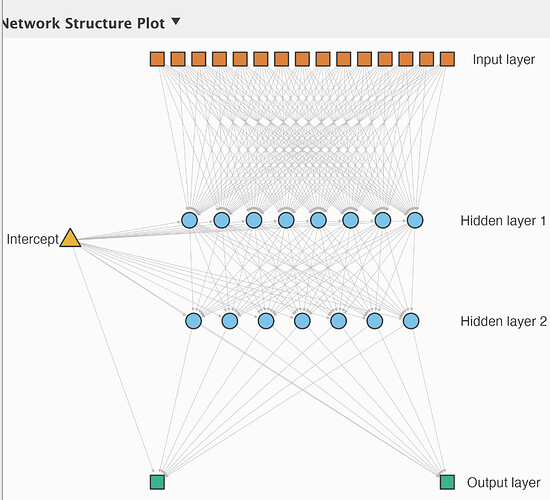

Edit: So, just in the kind-of-cool-graphics category here is a neural-net architecture I trained with JASP. To be sure I am not convinced this a great architecture and for sure I would have started with more layers (and used drop-out). But I am going to stick with my original impression that “this is cool” whether it actually works or not. Just because of how simple it was and how little time it took if nothing else.

Best,

Jim