Lets us download Predictor Pickle files for further analysis. Also, please let us upload locally trained models as a pickle file to use for back tests and live trading, that would make life much easier for many people here.

@marco I also find this VERY confusing. when using a AI Factor predictor live that was trained using 2-fold CV, what exactly is the training period? is it using the model trained on the first fold, or the more recent fold? is it both? if both, how is it combining the two training periods? is it with or without the hold-out period?

note that this question is for when we use the predictor live. not in the validation period.

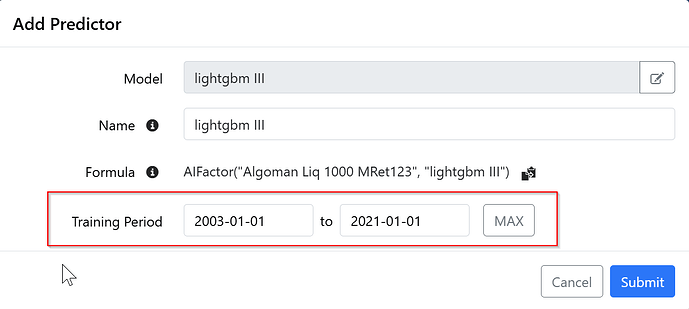

It uses the training period you set when creating the predictor. You are not using training data from validation.

If you want a predictor with the freshest data this is what you need to do (it's ![]() workflow which is why we need an overhaul):

workflow which is why we need an overhaul):

Example: you reference a predictor this formula: AIFactor("MyAi", "extra trees II")

- Copy your AI Factor (click the three bars and do a Save As). This creates a new AI Factor called "MyAi - Copy".

- Only select Predictors in the next page

- Load a new dataset as far as it lets you

- Train your "extra trees II" predictor

- Rename the AI Factor "MyAi" to "MyAi OLD"

- Rename the AI Factor "MyAi - Copy" to "MyAi"

When you rebalance you will now use the new predictor.

Should be easy

This is where it gets tricky. The features better line up exactly. But I suppose it's all doable. We are also planning to allow you to reference external datasets, so it makes total sense.

Thanks

This would be very useful.

okay thanks @marco that’s one heck of a workaround.

This has come up before, but it’s still creating unnecessary noise in model training—Sector and Industry should default to char, not numeric. Right now, LightGBM treats numeric encodings as ordinal, which leads to arbitrary splits (e.g., Sector 10 > Sector 3) that carry no economic meaning. Those splits look valid to the model but are effectively injecting noise into the tree structure, especially given how heavily sector/industry show up as top features in practice .

Setting these to categorical (char) forces the model to evaluate them correctly—as discrete groupings—so splits become economically interpretable (e.g., software vs. energy) rather than numerically arbitrary. This is a small change, but it directly improves signal quality and reduces the risk of misleading feature importance in AI factors.

Yes, there's no support for categorical atm. Trying to decide if we should add support for it now or as part of the next major version. We have not had a lot of users asking for it. Or maybe they don't realize what's happening.

Thanks

This has broad interest.

Summary of the feature request: excess returns relative to the universe as a target.

yes I ran into that when I first started using AI factors and wondered why there wasn't equal weight of the universe as benchmark…

then I forgot to do the feature request. thank you for the upvote.

Isn’t that already possible? Just used it yesterday with FRank(). If you get a lookforward error just add a term +Future%Chg(n)*0 with n being your desired period which you also use within the target formula.

Point 2) remains

Allow to train on a subset of data when training with GAM Models, like 200k of rows or so. It is not necessary to train with full dataset with GAM, it's just slow and uses memory.

unfortunately not possible today. your workaround doesn't actually forward lag the FRank(). maybe my request wasn't clear. I'd like to train on custom metrics such as FRank() but forward lagged so it's useful in AI factors. currently nested loops aren't supported so this isn't possible.