Thanks to Stephen for doing the test run.

What this script does:

Most users are familiar with the Ranking System Performance test feature, which outputs the returns by ‘bucket’ for a ranking system over a specified time period for a selected universe of stocks. This script reads a spreadsheet with hundreds of factors or formulas, automatically runs a ranking system performance test for each of them, and writes the results to a file. Those results can then be pasted into a ‘scoring’ sheet, which also has graphing capabilities. Each factor is scored on the slope and correlation between the bucket number and the bucket's average return, plus a few other measures. Users can add other scoring methods if needed. The Excel scoring spreadsheet is in the project folder for users to download.
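To make the slope and correlation scoring concrete, here is a minimal sketch in Python. It is not the formula used in the scoring spreadsheet; it just shows one common way to compute the two numbers, assuming the buckets are numbered 1 to N from worst rank to best. The function name `score_buckets` and the sample returns are made up for illustration.

```python
from math import sqrt

def score_buckets(avg_returns):
    """Return (slope, correlation) of bucket number vs. average return.

    Assumption: avg_returns is ordered from the lowest-ranked bucket to the
    highest-ranked bucket, and buckets are numbered 1..N.
    """
    n = len(avg_returns)
    if n < 2:
        raise ValueError("need at least two buckets")
    buckets = range(1, n + 1)
    mean_x = sum(buckets) / n
    mean_y = sum(avg_returns) / n
    sxx = sum((x - mean_x) ** 2 for x in buckets)
    syy = sum((y - mean_y) ** 2 for y in avg_returns)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(buckets, avg_returns))
    slope = sxy / sxx  # least-squares slope: return gained per bucket step
    # Pearson correlation; defined as 0.0 here when all returns are identical
    corr = sxy / sqrt(sxx * syy) if syy > 0 else 0.0
    return slope, corr

# Hypothetical average returns (%) for five buckets, worst rank to best rank
example = [2.0, 4.0, 6.0, 8.0, 10.0]
slope, corr = score_buckets(example)
print(f"slope={slope:.2f}, correlation={corr:.2f}")  # slope=2.00, correlation=1.00
```

A steep positive slope with a correlation near 1 means returns rise steadily from the worst bucket to the best, which is what you would hope to see from a useful factor.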

The key setting is the universe. Some possibilities are to run tests with the universe set to:

- The entire investable universe. Keep in mind that many factors perform very differently for small caps vs large caps.

- The general universe that you plan to invest in. For example, small caps.

- Split your general universe into 2 or more universes. Then run the factor tests for each universe. Look for factors that did well in both as that is an indicator that the factor is robust.

- Use a narrow universe to test factors for a certain sector or industry. For example, you can see which factors work well for banking stocks. But keep in mind that a very small universe is likely to give unreliable results.

- Create a universe with filters so that it contains only growth stocks. Then run the factor tests to see which factors are good complements for a universe of growth stocks.

Dates are also important. You may want to run the factor tests for several date ranges and then see which factors have done well in all periods, or which are doing well in the current period. For example, most value factors did very well in the 2000s but not very well in the last 5 years.
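Once you have scores from several runs (different date ranges, or the split universes mentioned above), picking out the factors that held up in every run is easy to automate. The sketch below is only an illustration: the function `robust_factors`, the factor names, the scores, and the 0.5 threshold are all hypothetical, and you would substitute whatever score column you use in the scoring sheet.

```python
def robust_factors(scores_by_run, threshold):
    """Return the factors whose score meets the threshold in every run.

    scores_by_run maps a run label (a date range or a universe name) to a
    dict of {factor_name: score}. Factors missing from any run are excluded.
    """
    runs = list(scores_by_run.values())
    if not runs:
        return []
    common = set(runs[0])
    for run in runs[1:]:
        common &= set(run)  # keep only factors that were tested in every run
    return sorted(f for f in common if all(run[f] >= threshold for run in runs))

# Hypothetical scores; names and numbers are made up for illustration
scores_by_run = {
    "2000-2009": {"FactorA": 0.9, "FactorB": 0.8, "FactorC": 0.2},
    "2010-2019": {"FactorA": 0.7, "FactorB": 0.1, "FactorC": 0.3},
}
print(robust_factors(scores_by_run, threshold=0.5))  # ['FactorA']
```

Here FactorB looks strong in the first period but fails in the second, so only FactorA survives the filter.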

How to use it:

Create a Google account if you do not already have one.

Go to Account Settings, DataMiner & API on the P123 site to get your API ID and Key. If you do not have a key yet, click Create Key on that page.

Open the shared project folder: https://drive.google.com/drive/folders/10P3ZnGVOFQeCjpXx_oFuNHhAprQ8X3Df?usp=sharing

Follow the instructions in the Introduction section of the SingleFactorTests.ipynb file.

Choose the factors/formulas you want to test from the spreadsheet provided, or add your own. Your account is allocated a certain number of API credits depending on your subscription level, and you can purchase additional credits for a very reasonable price. Each factor/formula test costs 2 API credits. Check your API credit balance before running a set of tests, because the script does not currently handle running out of credits partway through a run. If that happens, the script stops without writing the results to a file, and the credits already spent are wasted.
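A quick back-of-the-envelope check before you start can save wasted credits. The sketch below only does the arithmetic from the 2-credits-per-test figure above. It does not query your balance from P123, so you would type in the balance shown on your account page. The function names and the example numbers are hypothetical.

```python
CREDITS_PER_TEST = 2  # from the post: each factor/formula test costs 2 API credits

def credits_needed(num_factors, runs_per_factor=1):
    """Credits for testing every factor once per run (e.g. per universe or date range)."""
    return num_factors * runs_per_factor * CREDITS_PER_TEST

def can_afford(num_factors, credit_balance, runs_per_factor=1):
    """True if the balance you entered covers the whole set of tests."""
    return credits_needed(num_factors, runs_per_factor) <= credit_balance

# Hypothetical example: 300 factors tested on 2 universes, with 1000 credits left
needed = credits_needed(300, runs_per_factor=2)
print(needed)                                        # 1200
print(can_afford(300, 1000, runs_per_factor=2))      # False
```

If the check fails, trim the factor list or split the run into smaller batches so that each batch finishes and writes its results.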

Determine the settings you want to use for the universe, date range, frequency, etc.

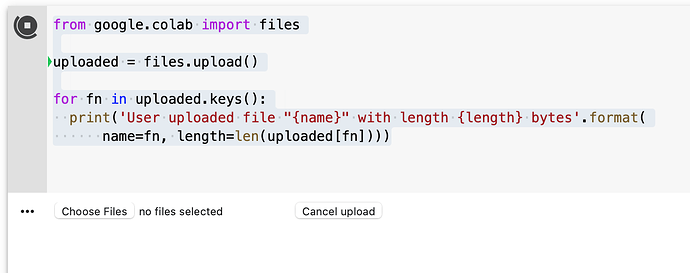

Run the steps in the Initialization section of the Colab Notebook.

Run the code in the Single Factor Script section.

Locate the results file on your Google Drive. Open the file and copy the results data.

Download the Excel scoring spreadsheet (ScoredFactors_Template.xls) from the project folder to your hard drive. In the Data tab, delete the existing data and paste in your results.