Jim, I’ve recently been working with screens of screens, and the interplay you mention is something I’ve noticed as I work with them.

An example might be:

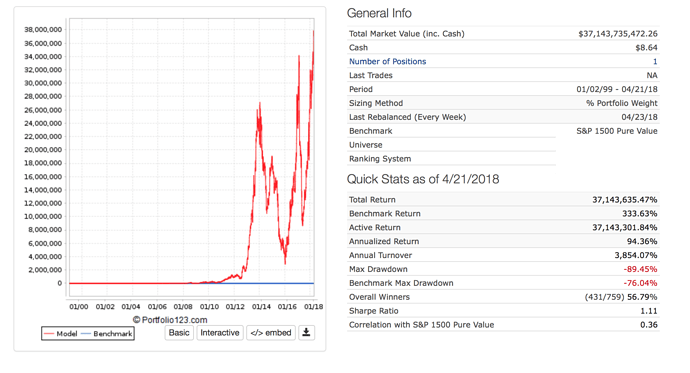

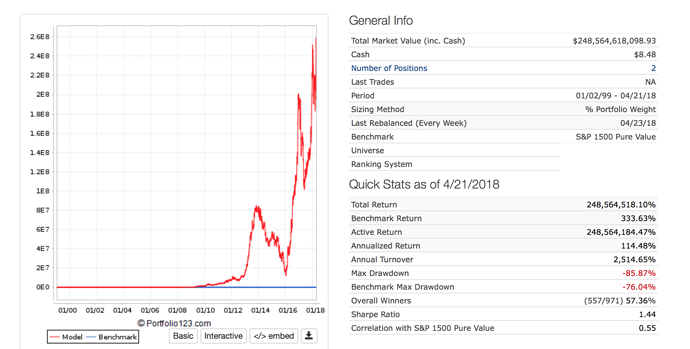

Let’s say I have 3 or 4 models that work decently and I want to take the very best stocks from each. If I put those 3 or 4 models together in a screen of screens and select only the top ranked stock from each, the result usually isn’t very good. I’m guessing that’s because the volatility is so high (it almost always is), and this formula shows how volatility brings down compound returns. Taking the top 2 stocks from each model is usually better, but still probably not optimal. Again, that’s probably not because something is wrong with the top 1-2 ranked stocks; it’s a follow-on effect of high volatility. Where I usually see the strongest results is when I combine the top 3 or 4 stocks from each model. With an average of 8-12 stocks, the volatility tends to come down enough that the benefit more than offsets the cost of selecting lower ranking stocks.
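To make the trade-off concrete, here is a rough sketch in Python. It is not taken from the formula in the images; it uses the common approximation that compound growth is about the arithmetic mean return minus half the variance, plus the textbook variance of an equal-weighted portfolio. Every number in it (`STOCK_VOL`, `CORRELATION`, `BEST_RETURN`, `RANK_DECAY`) is a made-up assumption, chosen only to show the shape of the effect, not measured from any real model.

```python
# Illustrative sketch only: all inputs below are hypothetical assumptions.
STOCK_VOL = 0.60     # assumed annual volatility of each individual stock
CORRELATION = 0.20   # assumed average pairwise correlation between picks
BEST_RETURN = 0.40   # assumed arithmetic mean return of the top ranked stock
RANK_DECAY = 0.003   # assumed drop in mean return per step down the ranking


def portfolio_volatility(n, stock_vol=STOCK_VOL, correlation=CORRELATION):
    """Volatility of n equal-weighted stocks with equal vol and correlation."""
    variance = stock_vol ** 2 * (1.0 / n + (1.0 - 1.0 / n) * correlation)
    return variance ** 0.5


def mean_return_of_top(n, best=BEST_RETURN, decay=RANK_DECAY):
    """Average arithmetic return of the top n stocks under a linear decay."""
    return best - decay * (n - 1) / 2.0


def approx_compound_return(mean_return, volatility):
    """Approximate compound growth: mean minus half the variance."""
    return mean_return - 0.5 * volatility ** 2


def growth_by_size(max_n=20):
    """Return {n: approximate compound return} for portfolios of 1..max_n."""
    return {
        n: approx_compound_return(mean_return_of_top(n), portfolio_volatility(n))
        for n in range(1, max_n + 1)
    }


if __name__ == "__main__":
    table = growth_by_size()
    for n, growth in table.items():
        print(f"{n:2d} stocks: vol={portfolio_volatility(n):.3f} "
              f"approx compound return={growth:.4f}")
    print("best size under these assumptions:", max(table, key=table.get))
```

Under these assumed inputs, a single stock has the highest average return but the lowest approximate compound return, and the peak lands at a mid-sized portfolio. Change the correlation or the decay and the peak moves, which is the balance described above.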

It’s not an intuitive dynamic (to me anyway), but I think it’s something anyone working with models will run into. At first it was confusing: I was testing models and wondering why my top ranked stocks didn’t perform so well in isolation, so I’d try excluding them and comparing results, but that didn’t help and usually hurt. Ultimately, the problem isn’t that the top ranked stocks are abnormal. It’s more that the effects of high volatility are difficult to recover from (although thinking this way might provide ideas about how to take advantage of that volatility via timed trades?), and there’s a benefit to lowering volatility even if it means picking more lower ranking stocks. Obviously there’s a balance, but it’s not intuitive at all to me - I came to it after experimenting with many backtests and a lot of head-scratching.

This provides a good framework for thinking about it.