Feature requests like this often go unanswered.

Other examples include early stopping in XGBoost and the ability to adjust the gap size separately at the front and back of each test fold in k-fold cross-validation. There’s no theoretical or practical reason the front and back gaps should be treated the same, yet these kinds of requests often don’t gain traction.
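To make the k-fold point concrete, here is a minimal sketch of contiguous folds with independent front and back gaps, assuming the rows are already sorted by date. The function name `gapped_kfold` and the parameters `gap_before` and `gap_after` are hypothetical names chosen for illustration; they are not part of P123 or scikit-learn.

```python
from typing import Iterator, List, Tuple


def gapped_kfold(
    n_samples: int,
    n_splits: int,
    gap_before: int = 0,
    gap_after: int = 0,
) -> Iterator[Tuple[List[int], List[int]]]:
    """Yield (train_idx, test_idx) for contiguous folds over time-ordered rows.

    The `gap_before` rows immediately before the test block and the
    `gap_after` rows immediately after it are dropped from training.
    (Hypothetical helper, for illustration only.)
    """
    if n_splits < 2 or n_splits > n_samples:
        raise ValueError("need 2 <= n_splits <= n_samples")
    if gap_before < 0 or gap_after < 0:
        raise ValueError("gaps must be non-negative")

    # Spread any remainder over the first folds, as scikit-learn's KFold does.
    base, extra = divmod(n_samples, n_splits)
    start = 0
    for fold in range(n_splits):
        stop = start + base + (1 if fold < extra else 0)
        test_idx = list(range(start, stop))
        # Clip the gaps at the edges of the data.
        lo = max(0, start - gap_before)
        hi = min(n_samples, stop + gap_after)
        train_idx = list(range(0, lo)) + list(range(hi, n_samples))
        yield train_idx, test_idx
        start = stop


# Example: 20 rows, 4 folds, 2-row gap in front of each test block, 1-row gap behind it.
splits = list(gapped_kfold(n_samples=20, n_splits=4, gap_before=2, gap_after=1))
```

With downloaded data, the two gap sizes can be set to whatever the label horizon and feature lookback call for, rather than to a single shared value.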

Historically—and still today—there have been many requests and only limited resources. P123 has had no choice but to prioritize, which is completely understandable.

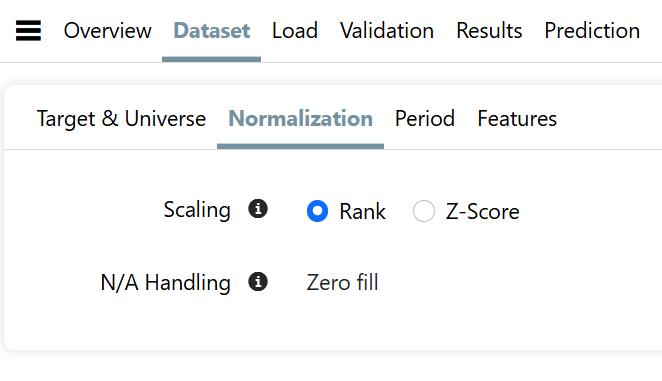

Just a guess, but I’d wager P123 hasn’t yet looked into LightGBM’s XENDCG ranking objective (`rank_xendcg`) or made any progress on more advanced handling of missing values (NAs), which has been raised before, let alone decided where either might fall in the long list of priorities. And again, just speculating, both are probably well behind bigger initiatives like adding support for Asian stocks or refining the feature selection process in the AI/ML module. Honestly, I might make the same call. I’m not here to debate priorities.

Personally, I’ve addressed this by downloading the data. Once I have it, I can do whatever I want—no need to wait or ask permission.

The only real downside is that testing new features this way is slower and more tedious than using P123’s integrated AI/ML tools.

At one point, P123 experimented with Python execution in Colab, allowing users to run code while still honoring FactSet data restrictions. That was a smart and creative solution—limited at the time, but perhaps worth revisiting now with improvements.

Today, with LLMs increasingly able to run code natively—often without even needing Colab—it might be the perfect time to explore API integration with tools like ChatGPT or Gemini 2.5. An LLM could run lightweight code, or securely offload it to AWS or another cloud service in a sandboxed environment.

Just to be clear: I’m not unhappy with the current platform. Far from it: I’m genuinely impressed and finding it incredibly useful. But I’m not using the AI/ML module myself, and it seems that some of those who do use it run into frustration.

There’s also a related issue: many of the most advanced users have professional or near-professional methods they prefer not to share publicly. They’re understandably reluctant to reveal those methods just to justify a feature request on the forum. A secure, sandboxed coding environment would allow them to implement and test their ideas privately—without needing to explain or defend their methods.

This post is intended purely as a constructive suggestion. In the meantime, I’ll continue downloading data and building models locally. But when it comes to specific feature requests for the AI/ML module, continuing to ask often feels counterproductive—for everyone, including P123.

That said, I do feel I now have a good degree of freedom to pursue my own methods, and I genuinely thank P123 for that. My only point is that maybe this freedom can be expanded even further.

And thank you, Hbee, for sharing your methods in the forum—I might give your approach a try via downloads.

As a final thought: it’s hard to imagine there aren’t a number of hedge fund managers or institutional users with serious coding skills—or dedicated quant teams—who would value the flexibility to build directly within the platform. Instead of relying on P123 to build every feature request, they could implement and test advanced methods themselves. People like Chaikin may have once needed custom development, but with today’s cloud tools and secure APIs, that level of dependency may no longer be necessary.

Offering this kind of power—without the retail discount—could make P123 even more attractive to enterprise clients, while letting individual users opt in at their own pace.

To some degree, I’m simply pointing out that P123 played a key role in building Chaikin’s original strategy, one that went on to generate millions. They could do more of that by enabling this kind of innovation, whether that means increasing revenue through volume or finding new ways to better monetize what they’re already doing for enterprise clients, especially given the increased demand this kind of flexibility would likely create.

Lastly, I know there’s been discussion about licensing algorithms within P123. The obvious challenges—NDAs, IP protection, and privacy—have always made that difficult to implement. But maybe it’s time to revisit the idea in a new way:

Imagine a secure environment where users can privately publish algorithms, and other members can securely integrate them with their own custom features or models—without exposing any proprietary methods.

Think of “Bob’s Random Forest on Steroids” meeting “Jane’s Subtle Feature Interactions.” Jane might have brilliant feature engineering but weak modeling skills. Bob, on the other hand, has exceptional modeling capabilities but no domain expertise. They could collaborate—or simply license and combine elements—while revealing only what they choose to share.

True collaboration: private, secure, and scalable—with complete control over proprietary methods.