TL;DR: I went back to using raw excess returns as a target.

@dnevin123 got me thinking about target normalization in this post: AI Factor - Recreating Linear Model Predictions

Specifically, I wondered whether using excess returns z-scored by date as the target was superior to using raw excess returns.
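To make the distinction concrete, here is a minimal sketch of what "z-scored by date" means: each date's excess returns are standardized against that date's cross-sectional mean and standard deviation. It assumes a plain dict of date -> {ticker: excess return}, uses the population standard deviation (a choice on my part; the sample version works too), and the tickers and numbers are made up for illustration.

```python
from statistics import mean, pstdev


def zscore_by_date(excess_returns):
    """Cross-sectionally z-score excess returns within each date.

    excess_returns: dict mapping date -> dict mapping ticker -> excess return.
    Returns the same shape, with each value replaced by (x - mean) / std,
    where mean and std are computed over all tickers on that date.
    """
    normalized = {}
    for date, by_ticker in excess_returns.items():
        values = list(by_ticker.values())
        mu = mean(values)
        sigma = pstdev(values)  # population std (assumption)
        normalized[date] = {
            # Guard against a date where every ticker has the same return.
            ticker: (x - mu) / sigma if sigma > 0 else 0.0
            for ticker, x in by_ticker.items()
        }
    return normalized


# Hypothetical data: a calm date and a volatile date with 10x the dispersion.
raw = {
    "2020-01-03": {"AAA": 0.01, "BBB": 0.00, "CCC": -0.01},
    "2020-03-20": {"AAA": 0.10, "BBB": 0.00, "CCC": -0.10},
}
z = zscore_by_date(raw)
print(z["2020-01-03"]["AAA"], z["2020-03-20"]["AAA"])
```

With the raw target, the volatile date dominates the loss because its returns are ten times larger; after z-scoring, the top stock gets the same target value on both dates, so every date carries roughly equal weight in training.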

I ran 100 models, each using a randomly selected set of variables, and compared each model's out-of-sample performance under the 2 targets.
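The key point of this design is that each random feature subset is scored under both targets, so every comparison is paired. A minimal sketch of that loop is below. `fit_and_score` is a hypothetical callable standing in for a full train/validate cycle, and the subset size, seed, and target labels are illustrative assumptions, not the settings used in this test.

```python
import random
from typing import Callable, List, Sequence, Tuple


def run_paired_experiment(
    all_features: Sequence[str],
    fit_and_score: Callable[[List[str], str], float],
    n_runs: int = 100,
    subset_size: int = 10,
    seed: int = 0,
) -> List[Tuple[float, float]]:
    """Draw one random feature subset per run and score it under both targets.

    fit_and_score is hypothetical: it should train a model on the given
    features with the named target and return an out-of-sample metric.
    Returns a list of (raw_target_metric, zscore_target_metric) pairs.
    """
    rng = random.Random(seed)  # seeded so the subsets are reproducible
    results = []
    for _ in range(n_runs):
        features = rng.sample(list(all_features), subset_size)
        raw_result = fit_and_score(features, "raw_excess")
        z_result = fit_and_score(features, "zscore_by_date")
        results.append((raw_result, z_result))
    return results


def dummy_scorer(features, target):
    # Stand-in for a real model; returns a fake metric that depends on the
    # feature subset and gives the z-score target a small fixed edge.
    base = sum(int(name[1:]) for name in features) / 100
    return base + (0.01 if target == "zscore_by_date" else 0.0)


pairs = run_paired_experiment(
    [f"f{i}" for i in range(30)], dummy_scorer, n_runs=5, subset_size=3
)
print(pairs)
```

Pairing on the same feature subset removes the feature-selection luck from each comparison, so the difference within a pair reflects the choice of target.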

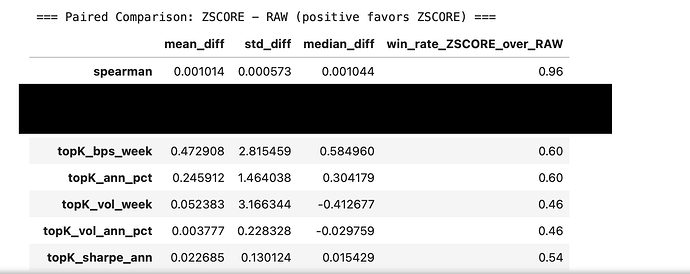

The far-right column counts how often each target gave the better out-of-sample returns (out of 100 runs). The remaining columns show the average difference in magnitude for each metric. Z-score normalization of excess returns by date clearly won, but the margins had little practical significance (for volatility, higher is worse).

Take the Sharpe ratio, for example. Using daily z-score normalization, a 15-stock screen gave the better-performing model just 54% of the time, and the average difference in Sharpe ratio was only 0.023, which is practically insignificant.

For returns, a 15-stock screen using daily z-score normalization gave the better out-of-sample result 60% of the time, but the average annualized difference in returns was just 0.26%.
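The two numbers quoted for each metric, a win rate (e.g. 54%) and an average difference (e.g. 0.023), can be computed from paired per-run results roughly as sketched below. This is my own illustration, not necessarily how the table above was produced: ties count as losses, the sign convention (z-score minus raw) is an assumption, and the input values are made up.

```python
from statistics import mean


def summarize(pairs, higher_is_better=True):
    """Return (win_rate, mean_diff) for the z-score target versus raw.

    pairs: list of (raw_metric, z_metric) from the same feature subset.
    win_rate: fraction of runs where the z-score target did strictly better.
    mean_diff: average of (z_metric - raw_metric). For metrics where lower
    is better (e.g. volatility), a negative mean_diff favors the z-score target.
    """
    if higher_is_better:
        wins = sum(z > r for r, z in pairs)
    else:
        wins = sum(z < r for r, z in pairs)
    return wins / len(pairs), mean(z - r for r, z in pairs)


# Hypothetical Sharpe ratios from 4 paired runs.
sharpe = [(0.80, 0.85), (0.90, 0.88), (0.70, 0.75), (1.00, 0.99)]
print(summarize(sharpe))

# Hypothetical annualized volatility, where lower is better.
vol = [(0.20, 0.19), (0.18, 0.21)]
print(summarize(vol, higher_is_better=False))
```

Looking at both numbers together is what makes the result "statistically a win, practically a wash": a win rate near 50% combined with a tiny average difference means the target choice barely moves the outcome.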

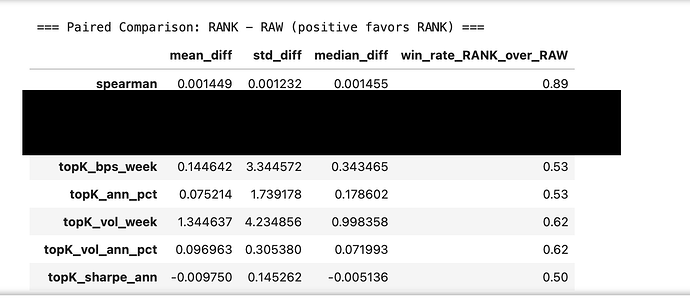

Rank normalization vs. raw excess returns was similarly unimpressive (for volatility, higher is worse):